
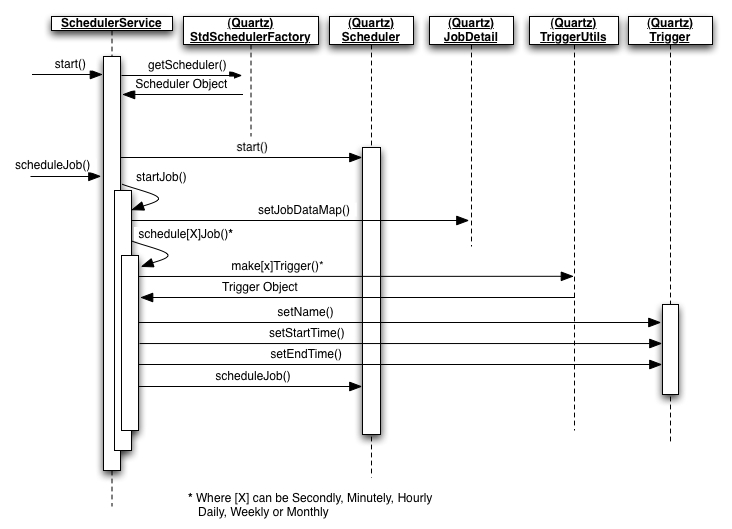

Implementations to handle job dependencies

Depending on the use case, you can use three different Airflow operators to handle job dependencies, as shown in the diagram. Because Apache Airflow doesn’t have a “Move Object” operator, we combined Airflow’s S3CopyObjectOperator and S3DeleteObjectsOperator to move an S3 object: an incoming file is copied to a different folder and then deleted from its original location, so it isn’t processed again. We used Airflow’s S3KeySensor as our S3 poller, so that the workflow reacts when a new object arrives.
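S3 itself has no native move or rename, so a “move” is always two steps. The following is a minimal, self-contained sketch of that copy-then-delete pattern. It assumes a plain Python dict as a hypothetical in-memory stand-in for an S3 bucket; the function names and the `incoming/` and `processed/` prefixes are illustrative and not taken from our DAG. In the actual workflow, the polling, copy, and delete roles are played by S3KeySensor, S3CopyObjectOperator, and S3DeleteObjectsOperator.

```python
from fnmatch import fnmatchcase

# Hypothetical in-memory stand-in for one S3 bucket: key -> object bytes.
Bucket = dict[str, bytes]


def find_keys(bucket: Bucket, pattern: str) -> list[str]:
    """Return keys matching a wildcard pattern (the poller's check)."""
    return sorted(k for k in bucket if fnmatchcase(k, pattern))


def copy_object(bucket: Bucket, src_key: str, dest_key: str) -> None:
    """Copy step (the role S3CopyObjectOperator plays in the DAG)."""
    bucket[dest_key] = bucket[src_key]


def delete_objects(bucket: Bucket, keys: list[str]) -> None:
    """Delete step (the role S3DeleteObjectsOperator plays in the DAG)."""
    for key in keys:
        bucket.pop(key, None)


def move_object(bucket: Bucket, src_key: str, dest_key: str) -> None:
    """No native move exists, so 'move' = copy, then delete the source."""
    copy_object(bucket, src_key, dest_key)
    delete_objects(bucket, [src_key])


def poll_and_move(bucket: Bucket, incoming: str = "incoming/",
                  processed: str = "processed/") -> list[str]:
    """One polling cycle: find new files, process them, move them out of incoming/."""
    handled = []
    for key in find_keys(bucket, incoming + "*"):
        # ... the real processing task would run here ...
        dest = processed + key[len(incoming):]
        move_object(bucket, key, dest)
        handled.append(dest)
    return handled


bucket = {"incoming/orders.csv": b"id,qty\n1,5\n"}
print(poll_and_move(bucket))  # ['processed/orders.csv']
print(poll_and_move(bucket))  # [] (already moved, so not processed again)
print(sorted(bucket))         # ['processed/orders.csv']
```

Because the copy and the delete are separate calls, the move is not atomic: if the delete fails after a successful copy, the file stays in the incoming folder and may be picked up again, so downstream processing should tolerate an occasional repeat.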

Amazon S3 is available on AWS Outposts as a new storage class called “S3 Outposts”. Although it delivers object storage on the customer’s premises, S3 on Outposts is still a fully managed service, designed to provide high durability and redundancy. We used Amazon S3 to store both the Airflow DAGs and the data files.

We used the open-source workflow management platform Apache Airflow for scheduling. Its capabilities include running, retrying, pausing, killing, and overriding jobs; running jobs concurrently; defining dependencies between jobs; viewing job execution status; passing values between tasks; and templating jobs. As mentioned above, our use case is an AWS Outposts deployment, so we did not use Amazon Managed Workflows for Apache Airflow (Amazon MWAA).
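In an Airflow DAG file, dependencies are declared with the bitshift syntax, for example `[extract_orders, extract_customers] >> join_tables >> publish_report`. To illustrate how declared dependencies make concurrent execution possible, here is a small sketch that uses Python’s standard-library `graphlib` (not Airflow’s scheduler) to group a hypothetical job graph into waves: jobs in the same wave do not depend on each other and could run concurrently. The job names are made up for the example.

```python
from graphlib import TopologicalSorter

# Hypothetical job graph: each job maps to the set of jobs it depends on.
JOBS = {
    "extract_orders": set(),
    "extract_customers": set(),
    "join_tables": {"extract_orders", "extract_customers"},
    "publish_report": {"join_tables"},
}


def parallel_batches(graph: dict[str, set[str]]) -> list[list[str]]:
    """Group jobs into waves; every job in a wave has all its upstream jobs done."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # jobs whose dependencies are satisfied
        batches.append(ready)
        sorter.done(*ready)  # assume the whole wave finished successfully
    return batches


print(parallel_batches(JOBS))
# [['extract_customers', 'extract_orders'], ['join_tables'], ['publish_report']]
```

A real scheduler also has to handle retries, failures, and skipped tasks, which this sketch deliberately ignores.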
